Why is data validation crucial for long-term data success?


According to Experian, 95% of business leaders report a negative impact on their business due to poor data quality. This shows why data validation is a critical step in keeping a data workflow running smoothly. Inconsistencies introduced at the beginning of the process can carry through to the final results and make them inaccurate. Checking the accuracy and quality of data before processing it is therefore extremely important.

But first things first: what is data validation? It is an essential step in data handling that produces consistent, accurate, and complete data and helps prevent data loss and errors. It lets users confirm that the data they are working with is valid through end-to-end tests for data accuracy, data completeness, and data quality.

While validation is a critical step, it is often overlooked. Businesses can perform various types of validation depending on their constraints and objectives. This article discusses the importance of data validation, approaches to carrying it out, its benefits, its challenges, and more.

Importance of Data Validation

Data validation improves the accuracy, cleanliness, and completeness of a dataset by catching data errors and confirming that the data is not corrupted. Validation can be performed on any data, including data within a single application such as Excel, and doing so produces better results. Inaccurate or incomplete data may lead end users to lose trust in the data.
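To make the completeness and accuracy checks concrete, here is a minimal sketch in Python. The records, the field names (`order_id`, `amount`, `order_date`), and the rules are all assumptions made up for illustration; a real project would take its rules from its own business requirements.

```python
from datetime import datetime

# Hypothetical records; the field names are illustrative only.
records = [
    {"order_id": "1001", "amount": "25.50", "order_date": "2023-01-15"},
    {"order_id": "1002", "amount": "", "order_date": "2023-01-16"},
    {"order_id": "1003", "amount": "-4.00", "order_date": "2023-02-30"},
]

REQUIRED_FIELDS = ("order_id", "amount", "order_date")


def validate_record(record):
    """Return a list of human-readable problems found in one record."""
    problems = []

    # Completeness: every required field must be present and non-empty.
    for field in REQUIRED_FIELDS:
        if not record.get(field, "").strip():
            problems.append(f"missing {field}")

    # Accuracy: amount must parse as a non-negative number.
    amount = record.get("amount", "").strip()
    if amount:
        try:
            if float(amount) < 0:
                problems.append("negative amount")
        except ValueError:
            problems.append("amount is not a number")

    # Accuracy: order_date must be a real calendar date in YYYY-MM-DD form.
    order_date = record.get("order_date", "").strip()
    if order_date:
        try:
            datetime.strptime(order_date, "%Y-%m-%d")
        except ValueError:
            problems.append("invalid order_date")

    return problems


# Map each order ID to the list of problems found (empty list means valid).
report = {r["order_id"]: validate_record(r) for r in records}
for order_id, problems in report.items():
    print(order_id, "OK" if not problems else "; ".join(problems))
```

Here the first record passes, the second is flagged as incomplete, and the third is flagged for a negative amount and an impossible date.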

Data validation is an essential part of the ETL (Extract, Transform, Load) process, which moves data from a source database to a target data warehouse. Validating the data during this move enhances the value of the data warehouse and the information stored in it.

Various tools, such as Grafana, MySQL, InfluxDB, and Prometheus, can be used for data validation.

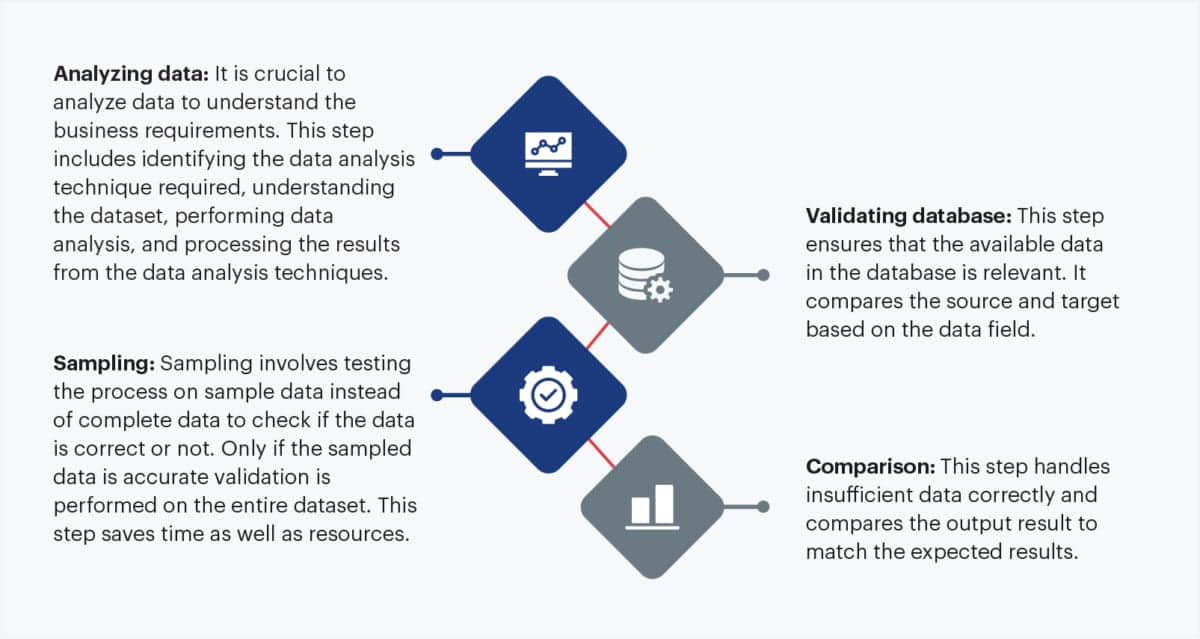

Data Validation Process

The data validation process consists of the following steps:

Approaches and Techniques

Data validation techniques are crucial for ensuring the accuracy and quality of data. They also ensure that data collected from different sources meets business requirements. Some of the popular data validation approaches are:

- Validation by Scripting: In this method, the entire validation process is written as a script in a language such as Bash or Python. For example, a CSV/XML file is created that lists the source and target database names, table names, and columns to compare. The script then reads this file, runs the comparisons, and reports the output. However, this is a time-consuming process, and the script itself requires verification.

- Validation by Grafana: Data validation can also be done in Grafana by creating a comparison dashboard that fetches data from the desired databases. The results can be shown as a table or a graph.

- Data Validation in Excel: Data validation in Excel can be performed by applying the required formulas to cells. Although it is a manual and potentially time-consuming task, many organizations use it widely for data validation.
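The validation-by-scripting approach can be sketched roughly as follows. This is an illustration under stated assumptions, not a reference implementation: the config layout (`source_table`, `target_table`, `column`), the table names, and the choice of row count plus column sum as the comparison are all hypothetical, and an in-memory SQLite database stands in for the real source and target systems.

```python
import csv
import io
import sqlite3

# Hypothetical comparison config: in practice this would be a CSV file on disk.
CONFIG_CSV = """source_table,target_table,column
src_orders,dw_orders,amount
src_customers,dw_customers,customer_id
"""

# Stand-in for real source and target databases: one in-memory SQLite database.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE src_orders (order_id INTEGER, amount REAL);
    CREATE TABLE dw_orders (order_id INTEGER, amount REAL);
    INSERT INTO src_orders VALUES (1, 10.0), (2, 20.0), (3, 30.0);
    INSERT INTO dw_orders VALUES (1, 10.0), (2, 20.0), (3, 30.0);

    CREATE TABLE src_customers (customer_id INTEGER);
    CREATE TABLE dw_customers (customer_id INTEGER);
    INSERT INTO src_customers VALUES (1), (2), (3);
    INSERT INTO dw_customers VALUES (1), (2);
""")


def profile(table, column):
    """Return (row count, column sum) for one table."""
    # Table and column names come from our own trusted config, so they are
    # interpolated directly; never do this with untrusted input.
    query = f'SELECT COUNT(*), COALESCE(SUM("{column}"), 0) FROM "{table}"'
    return conn.execute(query).fetchone()


# Compare each source/target pair listed in the config.
results = []
for row in csv.DictReader(io.StringIO(CONFIG_CSV)):
    source = profile(row["source_table"], row["column"])
    target = profile(row["target_table"], row["column"])
    status = "MATCH" if source == target else "MISMATCH"
    results.append((row["source_table"], row["target_table"], status))
    print(f'{row["source_table"]} -> {row["target_table"]}: {status} '
          f"(source={source}, target={target})")
```

In this sample data the orders tables match, while the customers tables do not because one row is missing from the target. Matching counts and sums do not prove the tables are identical; they are a cheap first check that a stricter row-by-row comparison can follow.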

Benefits

Data validation ensures that the data collected is accurate, of high quality, and healthy. Some benefits of data validation are:

- It is cost-effective: it saves time and money by making sure that the datasets collected and used in processing are clean and accurate.

- It is easy to integrate and is compatible with most processes.

- It ensures that data collected from different sources, whether structured or unstructured, meets business requirements by creating a standard database and cleaning dataset information.

- By increasing data accuracy, it helps increase profitability and reduce losses in the long run.

- It also supports better decision-making and strategy, and helps meet market goals.

Challenges

- Validating the data format can be extremely time-consuming, especially when dealing with large databases and performing the validation manually. However, sampling the data for validation can help reduce the time needed.

- Validating the data can be challenging because it may be distributed across multiple databases within a project.

- Data validation for a dataset with a few columns seems simple. However, as the number of columns in a dataset increases, it becomes a huge task.
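As a rough illustration of sampling for validation, the sketch below checks a random subset of rows instead of the whole dataset. The dataset, the `email` rule, and the sample size of 500 are assumptions chosen only for the example.

```python
import random

# Hypothetical dataset: 10,000 rows where roughly 2% have a missing email.
rng = random.Random(42)  # fixed seed so the run is repeatable
rows = [
    {"id": i, "email": "" if rng.random() < 0.02 else f"user{i}@example.com"}
    for i in range(10_000)
]


def is_valid(row):
    """A deliberately simple rule: the email field must contain an '@'."""
    return "@" in row["email"]


SAMPLE_SIZE = 500  # assumed budget for how many rows we can afford to check
sample = rng.sample(rows, SAMPLE_SIZE)  # sampling without replacement

invalid_in_sample = sum(1 for row in sample if not is_valid(row))
estimated_error_rate = invalid_in_sample / SAMPLE_SIZE

print(f"checked {SAMPLE_SIZE} of {len(rows)} rows")
print(f"estimated error rate: {estimated_error_rate:.1%}")
```

Sampling only estimates how common a problem is; it does not find every bad row. It is best suited to deciding quickly whether a dataset needs a full validation pass.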

In Conclusion

The data validation process is an important step in data and analytics workflows: it filters for quality data and improves the efficiency of the overall process. It not only produces data that is reliable, consistent, and accurate but also makes data handling easier. Companies are exploring options such as automation to make validation tests easy to execute and keep them in line with current requirements.

About author

Abhijeet Kumar is an Associate DevOps Engineer at Sigmoid. He enjoys exploring new technologies and his areas of interest are DevOps tools, Big Data and Bash Scripting. When not working, he likes to cook, play badminton and explore different cultures.
