Best practices for adopting multi-cloud strategy in your organization

Reading Time: 8 minutes

The era of cloud dominance is here, and businesses are racing to harness its power. Today, 85% of enterprises are projected to operate in multi-cloud environments, capitalizing on the agility, resilience, and innovation these setups offer.¹ However, this paradigm shift is not without its challenges: integration complexity, cost overruns, and security concerns often derail cloud strategies.

For organizations, multi-cloud is more than just a technology choice—it’s a strategic imperative. The approach empowers businesses to:

- Avoid vendor lock-in, ensuring flexibility and bargaining power.

- Mitigate risks of single-point failures, enhancing system uptime and reliability.

- Access specialized tools, using the best services each cloud provider offers for critical use cases.

Multi-cloud adoption demands sophisticated management strategies to deal with fragmented ecosystems, varying compliance norms, and diverse pricing structures. Understanding these dynamics is crucial for organizations looking to gain a competitive edge.

Multi cloud strategy vs hybrid cloud – The key differences

Both terms refer to enterprise cloud deployments that integrate more than one cloud platform. However, the two differ significantly in how the infrastructure is set up.

Understanding what a multi-cloud strategy is helps distinguish its unique advantages. In a multi-cloud architecture, an organization is free to use multiple cloud services delivered by multiple providers. Different cloud solutions are often aligned to different processes to drive best-of-breed results and reduce the risk of vendor lock-in. For instance, the needs of the sales and finance functions are often starkly different from those of an R&D function. This is where a multi-cloud strategy can help cloud architects drive efficiencies by aligning standalone cloud solutions to process-specific requirements.

This model allows companies to reduce their dependence on a single cloud provider, which makes it easier to control costs and enhance operational flexibility. Multi-cloud strategies often combine public cloud platforms from multiple vendors, such as Amazon Web Services (AWS), Microsoft Azure, Google Cloud Platform (GCP), and IBM. A hybrid cloud, on the other hand, combines public and private clouds for the same purpose, and it differs from multi-cloud on the following grounds:

- Hybrid cloud deployments combine public and private clouds. A multi-cloud deployment, by contrast, involves multiple public clouds and may also include virtual and physical infrastructure in private clouds.

- In a multi-cloud infrastructure, separate cloud services are aligned to separate processes. In a hybrid cloud setup, however, the components typically work in unison.

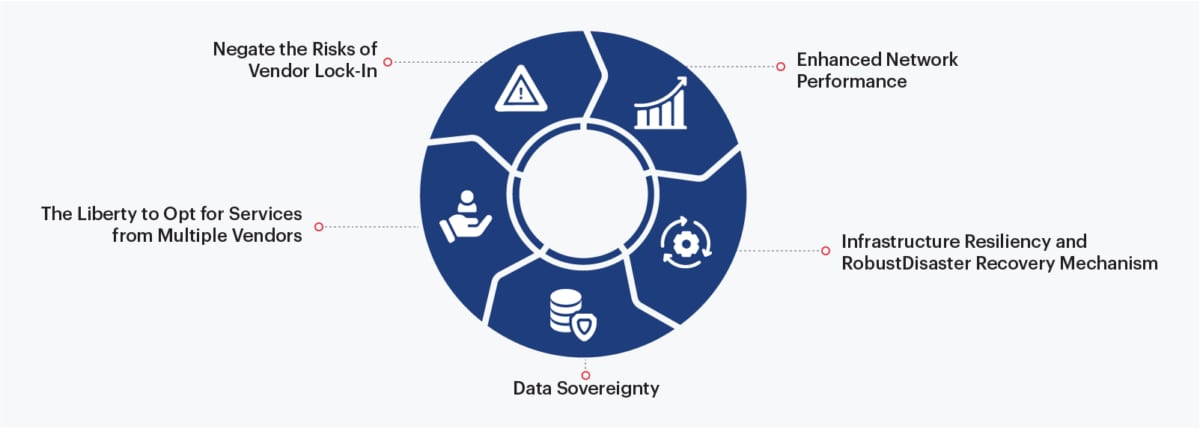

Benefits of multi cloud strategy for cloud infrastructure

Though the benefits of the cloud are tangible irrespective of the infrastructure that a company embraces, several aspects make a multi-cloud setup beneficial in the long run. Nearly 85% of organizations leveraging a multi-cloud approach report improved system resilience and reliability.² Several key factors have driven this increased push for multi-cloud adoption, such as:

1. The Liberty to Opt for Services from Multiple Vendors

Companies cannot expect to get best-of-breed cloud services from a single vendor. This is where multi-cloud adoption can help them select the best services for specific requirements from multiple vendors. For instance, a company might go with a particular cloud vendor to run fully managed (PaaS) workloads but leverage a different cloud provider’s robust AI and ML services to drive standalone analytics initiatives within processes. A multi-cloud infrastructure adds flexibility, allowing companies to optimize their analytics workloads better. Data analytics involves meticulous data engineering steps such as data discovery, data integration, data processing, data warehousing, and more. Shifting to a multi-cloud infrastructure gives a company the opportunity to select specific cloud tools to manage each of these steps.

2. Negate the Risks of Vendor Lock-In

A multi-cloud infrastructure allows companies to opt for cloud services from multiple vendors and helps distribute workloads across multiple cloud platforms depending on requirements, reducing vendor dependency. With a multi-cloud strategy, companies have the option to migrate to a different cloud service provider in response to changes in strategy, pricing models, or service level agreements (SLAs).
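One common way to keep that migration option open is to have application code depend on a small interface rather than on a vendor SDK. The sketch below is only an illustration under stated assumptions: `ObjectStore`, `ProviderAStore`, `ProviderBStore`, and `archive_report` are hypothetical names, and the two adapters are in-memory stand-ins rather than wrappers around any real provider API.

```python
from typing import Protocol


class ObjectStore(Protocol):
    """Minimal storage interface the application depends on (hypothetical)."""

    def put(self, key: str, data: bytes) -> None: ...
    def get(self, key: str) -> bytes: ...


class ProviderAStore:
    """In-memory stand-in for an adapter that would wrap provider A's SDK."""

    def __init__(self) -> None:
        self._blobs: dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._blobs[key] = data

    def get(self, key: str) -> bytes:
        return self._blobs[key]


class ProviderBStore:
    """Stand-in for a second provider that keys objects by (bucket, name)."""

    def __init__(self) -> None:
        self._objects: dict[tuple[str, str], bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        bucket, _, name = key.partition("/")
        self._objects[(bucket, name)] = data

    def get(self, key: str) -> bytes:
        bucket, _, name = key.partition("/")
        return self._objects[(bucket, name)]


def archive_report(store: ObjectStore, report_id: str, body: bytes) -> str:
    """Application code written once against the interface, not a vendor."""
    key = f"reports/{report_id}"
    store.put(key, body)
    return key


# The same application function runs unchanged against either adapter.
for store in (ProviderAStore(), ProviderBStore()):
    key = archive_report(store, "2024-q1", b"quarterly numbers")
    print(type(store).__name__, store.get(key))
```

With this shape, switching providers means writing one new adapter; the calling code stays the same.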

3. Enhanced Network Performance

By allowing companies to extend their networks to multiple cloud providers, a multi-cloud infrastructure leverages fast and low-latency connections to enhance application response times and deliver a satisfactory user experience. In this setup, companies can opt for cloud services from a provider based on their proximity to the provider regions for maximum speed and network uptime.
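As a rough illustration of proximity-based selection, the sketch below picks the provider region with the lowest measured round-trip latency. The provider labels, region names, and latency figures are made up for the example; in practice the measurements would come from real probes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegionLatency:
    provider: str      # hypothetical provider label
    region: str        # hypothetical region name
    latency_ms: float  # round-trip time measured from the user's location


def pick_lowest_latency(candidates: list[RegionLatency]) -> RegionLatency:
    """Return the candidate with the smallest measured round-trip latency."""
    if not candidates:
        raise ValueError("at least one candidate region is required")
    return min(candidates, key=lambda c: c.latency_ms)


# Illustrative measurements only.
measurements = [
    RegionLatency("cloud-a", "eu-west", 42.0),
    RegionLatency("cloud-b", "eu-central", 18.5),
    RegionLatency("cloud-c", "us-east", 95.3),
]
best = pick_lowest_latency(measurements)
print(f"{best.provider}/{best.region}")
```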

4. Infrastructure Resiliency and Robust Disaster Recovery Mechanism

Multi-cloud environments make it easier for companies to allocate redundant workloads across different cloud platforms to manage disaster recovery effectively. In a multi-cloud setup, companies can create workload replicas across two or three cloud platforms. During downtime, any one of these replicas can continue to function.
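The failover idea can be sketched as a priority-ordered list of replicas, each with a health probe, where traffic goes to the first replica that reports healthy. This is a simplified illustration: `Replica` and `choose_active` are hypothetical names, and the lambdas stand in for real health checks.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Replica:
    provider: str                   # hypothetical provider label
    is_healthy: Callable[[], bool]  # a real probe would call a health endpoint


def choose_active(replicas: Sequence[Replica]) -> Replica:
    """Return the first healthy replica, scanning in priority order."""
    for replica in replicas:
        if replica.is_healthy():
            return replica
    raise RuntimeError("no healthy replica available")


# Simulate an outage on the primary platform.
replicas = [
    Replica("cloud-a", lambda: False),  # primary, currently down
    Replica("cloud-b", lambda: True),   # secondary
    Replica("cloud-c", lambda: True),   # tertiary
]
active = choose_active(replicas)
print(active.provider)
```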

5. Data Sovereignty

A multi-cloud setup makes it easier for companies to comply with data sovereignty laws and regulations because it allows data to be stored within the same country or region where it was collected.
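A minimal sketch of residency-aware routing might map each country of collection to an approved storage location and refuse to store data when no mapping exists. The country codes, provider labels, and region names below are illustrative assumptions, not a statement of what any regulation requires.

```python
# Hypothetical policy table: country of collection -> (provider, region).
STORAGE_BY_COUNTRY: dict[str, tuple[str, str]] = {
    "DE": ("cloud-a", "eu-central"),
    "FR": ("cloud-a", "eu-west"),
    "IN": ("cloud-b", "in-west"),
}


def storage_target(country_code: str) -> tuple[str, str]:
    """Return the approved (provider, region) for data collected in a country.

    Fails closed: an unknown country raises instead of falling back to a
    default region, so data never lands somewhere unapproved by accident.
    """
    try:
        return STORAGE_BY_COUNTRY[country_code.upper()]
    except KeyError:
        raise LookupError(
            f"no approved storage region for {country_code!r}"
        ) from None


print(storage_target("de"))
```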

The challenges of a multi cloud architecture

While the benefits offered by a multi-cloud environment make it a compelling proposition for companies looking to operate a diverse range of applications, it is important to understand what adopting a multi-cloud strategy means for your operations. It offers flexibility and scalability, but it can become highly complex if not properly managed. Multi-cloud environments come with some inherent challenges that companies need to be prepared for. For instance:

1. Escalating Costs

With multiple cloud providers, companies may find it challenging to manage ad-hoc service costs, subscription costs, etc. Cloud service providers often come with distinct billing and subscription models that can become too complex to manage. Without a proper cost management and consumption plan, companies may lose a significant amount of money due to wasted resources.
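One way to tame distinct billing models is to normalize each provider's export into a common record shape before aggregating. The sketch below assumes two made-up export formats (`tag_team`/`cost_usd` and `labels`/`amount_cents`); real billing exports differ per provider and would need their own normalizers.

```python
from collections import defaultdict


def normalize_a(row: dict) -> dict:
    """Hypothetical provider A export: flat row, cost already in USD."""
    return {"provider": "cloud-a", "team": row["tag_team"], "usd": row["cost_usd"]}


def normalize_b(row: dict) -> dict:
    """Hypothetical provider B export: nested labels, cost in cents."""
    return {
        "provider": "cloud-b",
        "team": row["labels"]["team"],
        "usd": row["amount_cents"] / 100,
    }


def total_by_team(records: list[dict]) -> dict[str, float]:
    """Sum normalized spend per team across all providers."""
    totals: dict[str, float] = defaultdict(float)
    for record in records:
        totals[record["team"]] += record["usd"]
    return dict(totals)


# Illustrative billing rows only.
rows_a = [{"tag_team": "analytics", "cost_usd": 120.0},
          {"tag_team": "web", "cost_usd": 80.5}]
rows_b = [{"labels": {"team": "analytics"}, "amount_cents": 4550}]

records = [normalize_a(r) for r in rows_a] + [normalize_b(r) for r in rows_b]
totals = total_by_team(records)
print(totals)
```

Once spend is in one shape, per-team budgets and waste reports can be built on a single view instead of one per provider.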

2. Complex Management

In a multi-cloud environment, companies need to constantly monitor application setup and configuration across diverse cloud platforms. Managing applications can be challenging, especially when application components are stored in different clouds and workloads are scattered across cloud resources. Operating a data pipeline when data sources are scattered remains an arduous task for many companies even in the best of circumstances. When data teams are asked to maintain pipelines for huge clusters of constantly changing, diverse data sets spread across multiple clouds and on-prem platforms, the task becomes far harder. To ensure that a multi-cloud infrastructure performs to its full potential, companies need to adequately train IT staff on all the cloud platforms implemented and create the necessary integration channels wherever required. Ultimately, successful management of multi-cloud infrastructure boils down to how well a company evaluates the key attributes of the cloud solutions at its disposal and aligns them to serve specific needs.

3. Security Risks

Security requirements are expected to become more stringent going forward as the complexities of multi-cloud infrastructure increase. Cloud service providers often come with adequate security services, which may become inadequate as data and workloads get disseminated across cloud platforms. To devise a resilient security mechanism, companies need to embrace an end-to-end approach to security where proper controls and stringent access rights are implemented at the right touchpoints.

4. Steep Learning Curve

Leading cloud service providers regularly introduce new services and upgrades, which makes the multi-cloud space highly dynamic. Business leaders and data teams need to stay abreast of these constant updates to ensure that end-user adoption of a particular cloud solution is seamless. Rapidly changing cloud technology and regular updates, coupled with a talent crunch, can make it difficult for a company to derive maximum value from its multi-cloud initiative.

5. Risk of Non-Compliance

Companies need to comply with different data regulations and standards such as PCI DSS, GDPR, and HIPAA, including rules on handling personally identifiable information (PII), in a multi-cloud environment. Without a robust compliance mechanism in place, companies can become vulnerable to the risks of data theft and loss.

Orchestrating a Robust Multicloud Adoption Strategy – Best Practices

With the right adoption strategy, companies stand a better chance of negating the challenges of multi-cloud environments. The following are some of the best practices that companies need to follow to ensure that their multi-cloud infrastructure delivers the best outcomes:

- Data governance: While devising a multi-cloud strategy, companies need to focus on creating a strong data governance program. That way, every end-user can have complete visibility on the location of data, irrespective of the cloud platform where it is stored.

- Workload distribution: Another important aspect that companies need to keep in mind while charting out their multi-cloud strategy is integration and management. Companies must have well-thought-out strategies for data pipeline monitoring, ITSM integration, patch management, and application lifecycle management. Standardizing consumption patterns based on requirements and cloud service type is also essential. For instance, companies can choose Microsoft Azure for .NET application development and at the same time leverage data labs on Google Cloud to build analytics capabilities. That way, companies can effectively distribute workloads across multiple cloud platforms and, in the process, reduce wait time, improve application response, avoid service level agreement violations, and reduce the chances of downtime.

- Integrated systems: To reap tangible returns from a multi-cloud environment, companies need to develop an integrated system of resources. Integration makes it easier for companies to constantly monitor disparate cloud networks, schedule maintenance for applications, and identify potential threats to the cloud infrastructure.

- iPaaS: An iPaaS (Integration Platform as a Service) solution allows cloud services from multiple vendors to be integrated seamlessly. It can serve as a backbone for a multi-cloud enterprise architecture by enhancing system connectivity between various cloud environments and allowing cloud vendors to analyze, assess, and synchronize data securely and quickly. It can also simplify integration processes with reusable data modules to facilitate practical self-service analysis.

- Containerization: To ensure seamless migration of workloads between cloud platforms and easy application maintenance, organizations need to bundle specific applications within containers. This packaging significantly enhances the portability of the application and its workloads across diverse runtime environments. Containers can prove particularly beneficial in microservices environments, where an application is broken down into smaller components that can be moved independently.

- Security: Implementation of proper security protocols is key to negating known and unknown threats in a multi-cloud environment. The right security posture will entail complete visibility and control of all applications across the cloud platforms with robust access control, data security, network security, application security, and audit trails.
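To make the data governance practice above more concrete, here is a minimal sketch of a cross-cloud data catalog that records where each data set lives and who owns it. `DatasetEntry` and `DataCatalog` are hypothetical names, and the entries are invented; a production catalog would add persistence, access control, and lineage.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DatasetEntry:
    name: str
    provider: str  # hypothetical provider label
    region: str    # hypothetical region name
    owner: str     # team accountable for the data set


class DataCatalog:
    """In-memory registry answering 'where does this data set live?'."""

    def __init__(self) -> None:
        self._entries: dict[str, DatasetEntry] = {}

    def register(self, entry: DatasetEntry) -> None:
        if entry.name in self._entries:
            raise ValueError(f"data set {entry.name!r} is already registered")
        self._entries[entry.name] = entry

    def locate(self, name: str) -> DatasetEntry:
        """Look up one data set; raises KeyError if it was never registered."""
        return self._entries[name]

    def by_provider(self, provider: str) -> list[str]:
        """List data set names stored with a given provider, sorted by name."""
        return sorted(
            e.name for e in self._entries.values() if e.provider == provider
        )


catalog = DataCatalog()
catalog.register(DatasetEntry("sales_orders", "cloud-a", "eu-west", "sales"))
catalog.register(DatasetEntry("clickstream", "cloud-b", "us-east", "analytics"))
catalog.register(DatasetEntry("invoices", "cloud-a", "eu-west", "finance"))

print(catalog.locate("clickstream").provider)
print(catalog.by_provider("cloud-a"))
```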

In Conclusion

Cloud as a technology has rapidly evolved from private to hybrid and now to multi-cloud. The shift explains why a multi-cloud strategy matters: it offers businesses the flexibility to select best-of-breed services, avoid vendor lock-in, and optimize diverse application workloads. Given that these benefits significantly outweigh the inherent challenges of a multi-cloud environment, it is poised to become the de facto standard for cloud deployments.

However, to capitalize on the opportunities presented by multi-cloud, companies must ensure robust management mechanisms are in place. With data scattered across public, private, hybrid, and on-premises platforms, businesses must adequately secure and manage this data irrespective of its environment. Adopting a multi-cloud strategy empowers organizations to achieve these goals while unlocking operational resilience and strategic agility.

About author

Gunasekaran S is the Chief of Staff, Data Engineering at Sigmoid with over 20 years of experience in consulting, system integration, and big data technologies. As an advisor to customers on data strategy, he helps in the design and implementation of modern data platforms for enterprises in the Retail, CPG, BFSI, and Travel domains to drive them toward becoming data-centric organizations.

2. https://www.techrepublic.com/article/85-of-organizations-will-be-cloud-first-by-2025-says-gartner/
